AI agents write emails, summarise meetings, automate workflows, and provide investment advice. And yet, most of them share a fundamental problem: they forget.

Volatile memory, truncated context windows, barely any persistent understanding of a user's evolving intentions or environment. The result? Repetitions, hallucinations, and a loss of perspective on what truly matters.

This is precisely where temporal knowledge graphs come in. Unlike static knowledge graphs, they integrate time as a first-class dimension. They capture not only what happened, but also when – and how relationships evolve over time. Static knowledge becomes a living, evolving memory.
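To make the idea concrete, here is a minimal sketch of what "time as a first-class dimension" can look like in code. It is an illustration, not the implementation shown in the talk: the names `TemporalFact`, `TemporalGraph`, `assert_fact`, `as_of`, and `history` are hypothetical, and the sketch assumes each subject holds only one value per relation at a time, so a new fact closes the previous one.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class TemporalFact:
    """One edge of the graph, annotated with the period in which it holds."""
    subject: str
    relation: str
    obj: str
    valid_from: date
    valid_to: Optional[date] = None  # None means "still valid"

    def holds_at(self, when: date) -> bool:
        # Half-open interval: valid_from <= when < valid_to
        return self.valid_from <= when and (self.valid_to is None or when < self.valid_to)


class TemporalGraph:
    """Hypothetical in-memory store; a real system would use a graph database."""

    def __init__(self) -> None:
        self.facts = []

    def assert_fact(self, subject: str, relation: str, obj: str, since: date) -> None:
        # Assumption: relations are single-valued, so the open fact (if any)
        # for this subject and relation ends when the new one starts.
        for fact in self.facts:
            if fact.subject == subject and fact.relation == relation and fact.valid_to is None:
                fact.valid_to = since
        self.facts.append(TemporalFact(subject, relation, obj, since))

    def as_of(self, subject: str, relation: str, when: date) -> Optional[str]:
        # What was true at a given point in time?
        for fact in self.facts:
            if fact.subject == subject and fact.relation == relation and fact.holds_at(when):
                return fact.obj
        return None

    def history(self, subject: str, relation: str) -> list:
        # How did the relationship evolve over time?
        matching = [f for f in self.facts if f.subject == subject and f.relation == relation]
        return sorted(matching, key=lambda f: f.valid_from)


graph = TemporalGraph()
graph.assert_fact("alice", "prefers_channel", "email", since=date(2025, 1, 1))
graph.assert_fact("alice", "prefers_channel", "chat", since=date(2025, 6, 1))

print(graph.as_of("alice", "prefers_channel", date(2025, 3, 15)))  # email
print(graph.as_of("alice", "prefers_channel", date(2025, 7, 1)))   # chat
print([f.obj for f in graph.history("alice", "prefers_channel")])  # ['email', 'chat']
```

A static graph would keep only the latest edge and lose the fact that the preference was ever different. Keeping the validity interval on each edge lets an agent answer both "what is true now?" and "what was true back then?".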

Michael Banf and Johannes Kuhn presented part of this research at Neo4j Nodes '25: "Building Evolving AI Agents Via Dynamic Memory Representations Using Temporal Knowledge Graphs." The talk demonstrated how temporal granularity in knowledge graphs enables applications ranging from personalised recommendations and industrial process monitoring to medical diagnosis assistants.

For us at Perelyn, this work is directly connected to the question of how AI systems become useful in the long run – not just at the moment of a query, but over weeks and months.

The full recording of the talk is available on YouTube.

News

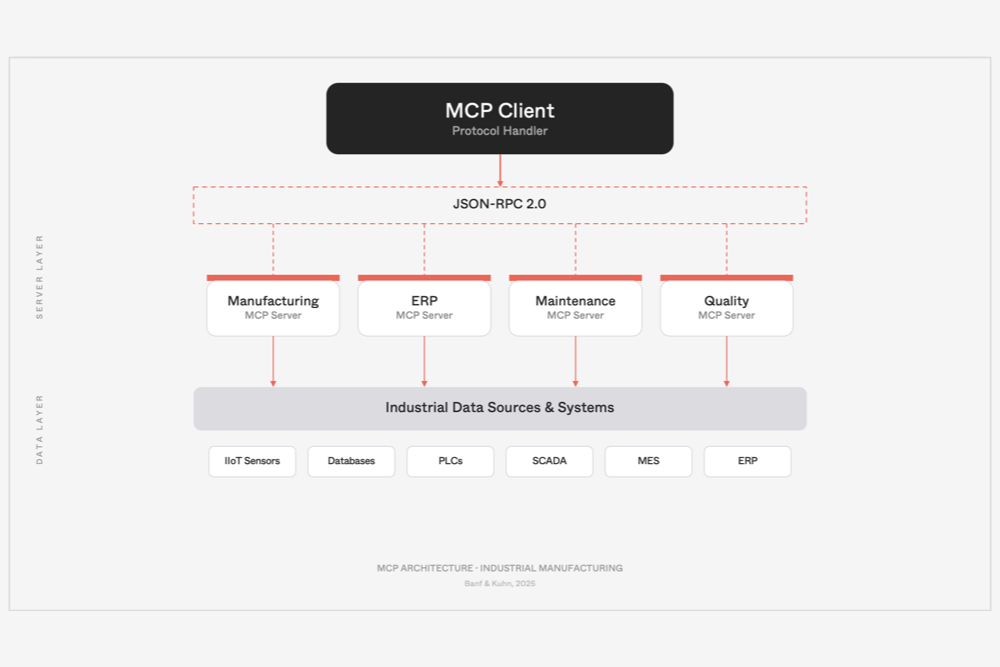

The proceedings from the "AI in Production" workshop at KI2025 have been published. Among the contributions is a paper by our team on the use of the Model Context Protocol (MCP) in industrial production environments.

Dominik Filipiak and Michael Banf are co-authors of a community paper on the 2025 Topological Deep Learning Challenge, published in the Proceedings of Machine Learning Research. Their contributions feed into TopoBench, an open benchmarking library for the research community.